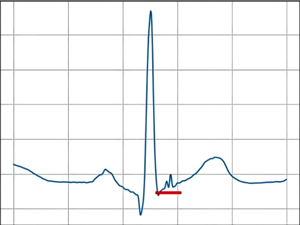

Classification of low-amplitude ECG components using adaptive activation functions of neural networks

DOI:

https://doi.org/10.3103/S0735272724120021

Keywords:

electrocardiography, neural network, adaptive activation function, ventricular late potentials, VLP, atrial late potentials, ALP, problem of exploding gradients, problem of vanishing gradients

Abstract

A promising direction in the development of neural networks for the analysis and classification of biomedical signals is the use of trainable activation functions, known as adaptive activation functions (AAFs). Such functions allow a network to adapt to heterogeneous data, thereby improving classification accuracy. This paper considers the application of AAFs to the classification of low-amplitude components of the electrocardiogram (ECG), specifically ventricular late potentials (VLP) and atrial late potentials (ALP), which are important for the early detection of cardiac tachyarrhythmias. To evaluate the impact of AAFs on the quality of VLP and ALP detection, two fully connected neural networks with different numbers of hidden layers were developed. The study established that using AAFs increases both the accuracy of VLP and ALP classification and the training speed of the neural network models compared to non-adaptive activation functions. To mitigate the problems of “vanishing” and “exploding” gradients of the loss function, as well as the effect of “dead” neurons that arise during neural network training, a new activation function was developed that normalizes the weight coefficients, preventing excessively high or low gradients. The developed activation function increases the speed and stability of neural network training and improves the recognition accuracy of low-amplitude ECG components compared to other activation functions. With the developed AAF, the highest classification accuracy was obtained for both VLP (94.7%) and ALP (91.4%). To analyze a large number of activation functions simultaneously, a coefficient was developed that assesses the redundancy of network layers. The proposed coefficient detects “bottlenecks” in neural network architectures and significantly simplifies the analysis and improvement of neural networks.
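To make the notion of a trainable activation function concrete, the following is a minimal sketch of one well-known AAF, the parametric ReLU, whose negative-side slope is learned by gradient descent. It is not the activation function developed in the paper, whose form the abstract does not give. The class `PReLU`, the helper `train_slope`, the learning rate, and the toy data are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class PReLU:
    """Parametric ReLU: f(x) = x for x > 0, a * x otherwise.

    The slope `a` is a trainable parameter (illustrative example only,
    not the activation function proposed in the paper).
    """
    a: float = 0.25

    def forward(self, x: float) -> float:
        return x if x > 0 else self.a * x

    def grad_input(self, x: float) -> float:
        # df/dx: 1 on the positive side, a on the non-positive side.
        # With a != 0 the gradient never vanishes entirely, which is
        # how this family avoids "dead" units.
        return 1.0 if x > 0 else self.a

    def grad_slope(self, x: float) -> float:
        # df/da: only non-positive inputs contribute to learning the slope.
        return 0.0 if x > 0 else x


def train_slope(act: PReLU, inputs, targets, lr: float = 0.1, epochs: int = 200) -> PReLU:
    """Fit the slope `a` by gradient descent on the mean squared error."""
    n = len(inputs)
    for _ in range(epochs):
        grad = 0.0
        for x, t in zip(inputs, targets):
            err = act.forward(x) - t
            grad += 2.0 * err * act.grad_slope(x) / n
        act.a -= lr * grad
    return act


if __name__ == "__main__":
    # Hypothetical toy data generated with a "true" negative slope of 0.1.
    xs = [-2.0, -1.0, -0.5, 0.5, 1.0, 2.0]
    ts = [x if x > 0 else 0.1 * x for x in xs]
    fitted = train_slope(PReLU(a=0.25), xs, ts)
    print(f"learned slope: {fitted.a:.4f}")
```

In a full network the slope would be updated jointly with the weights by backpropagation; the sketch isolates the activation parameter to show only what "trainable" means here.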
