Fourth moment and its functional transformations as measures of clipping degree and quality of acoustic signal

DOI: https://doi.org/10.3103/S0735272721050046

Keywords: clipping, kurtosis, speech signal, music signal

Abstract

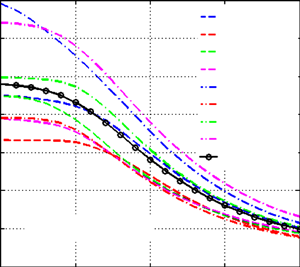

It is shown that the fourth standardized moment (kurtosis) and some of its functional transformations (the inverse value and the square root of the inverse value) can serve as objective measures of the clipping and quality of speech and music signals. The essential advantages of the proposed measures are that they require neither a prior estimate of the probability density of the analyzed signal nor information about the undistorted signal. Correlation indices were calculated and correspondence maps were constructed that relate the estimates of the proposed measures to subjective quality evaluations of clipped sound signals, which makes it possible to calibrate the objective measures. It was shown that the correspondence maps for the simple functional transformations of kurtosis can be approximated by first- or second-order polynomials, whereas approximating the correspondence maps for kurtosis itself requires fourth-order polynomials. This fact, together with the bounded range of possible values of these measures, means that the functional transformations of kurtosis may be preferable in engineering applications. The proposed measures were compared with an alternative measure, the clipping factor. The clipping factor was shown to be less effective than the proposed measures at high clipping levels of speech and music signals.
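As an illustration of the three measures named in the abstract, the following minimal sketch computes the kurtosis, its inverse, and the square root of its inverse for a test signal at several hard-clipping thresholds. It is not the authors' implementation: the function names (`kurtosis`, `clipping_measures`, `hard_clip`), the symmetric hard-clipping model, the Laplace-distributed test signal used as a rough stand-in for speech, and the chosen thresholds are all assumptions made for the example.

```python
import numpy as np

def kurtosis(x):
    """Fourth standardized moment: m4 / m2**2 (non-excess form)."""
    x = np.asarray(x, dtype=float)
    d = x - x.mean()
    m2 = np.mean(d ** 2)
    m4 = np.mean(d ** 4)
    return m4 / m2 ** 2

def clipping_measures(x):
    """Kurtosis and the two transformations named in the abstract."""
    k = kurtosis(x)
    return {
        "kurtosis": k,
        "inverse": 1.0 / k,
        "sqrt_inverse": np.sqrt(1.0 / k),
    }

def hard_clip(x, threshold):
    """Symmetric hard clipping (assumed distortion model)."""
    return np.clip(x, -threshold, threshold)

# Hypothetical test signal: Laplace noise, not real speech or music.
rng = np.random.default_rng(0)
signal = rng.laplace(size=100_000)
peak = np.max(np.abs(signal))

# Thresholds as fractions of the peak amplitude (arbitrary choices).
for fraction in (1.0, 0.5, 0.2, 0.05):
    m = clipping_measures(hard_clip(signal, fraction * peak))
    print(f"threshold={fraction:4.2f}*peak  "
          f"kurtosis={m['kurtosis']:.3f}  "
          f"1/kurtosis={m['inverse']:.3f}  "
          f"sqrt(1/kurtosis)={m['sqrt_inverse']:.3f}")
```

Because the kurtosis of any non-constant signal is at least 1, both transformations lie in the interval (0, 1], which is one way to read the bounded range of values mentioned in the abstract. Only the signal under test is needed; no reference to the undistorted signal enters the computation.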
